The Caption Segmentation Problem Nobody Talks About

"I went to the" / "store yesterday" — why most AI captions break sentences in terrible places, and how we fixed it.

Kevin Li

Here's something that bugged me for months.

You upload a video where someone says: "I went to the grocery store yesterday to buy some eggs."

Most caption tools will break this into something like:

Line 1: "I went to the"

Line 2: "grocery store yesterday"

Line 3: "to buy some eggs"

Read that again. "I went to the" — the what? Your brain has to hold that fragment in working memory until the next line appears. It's like reading a book where someone cut each line with scissors at random intervals.

This is the caption segmentation problem, and almost nobody in the caption tool space talks about it. It is one reason we treat captions as an editing workflow, not just a transcript pasted on top of a video.

Why It's Harder Than It Looks

The naive approach: split every N words. That's what most tools do. Every 3-5 words, new line. Simple, consistent, terrible.
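To make the failure concrete, here is a minimal sketch of fixed-N splitting. The function name `split_every_n_words` and the choice of `n=4` are my own for illustration, not taken from any particular tool.

```python
def split_every_n_words(text: str, n: int = 4) -> list[str]:
    """Naive segmentation: start a new caption line every n words."""
    words = text.split()
    # Slice the word list into consecutive groups of n, ignoring grammar entirely.
    return [" ".join(words[i:i + n]) for i in range(0, len(words), n)]


naive_lines = split_every_n_words(
    "I went to the grocery store yesterday to buy some eggs", n=4
)
for line in naive_lines:
    print(line)
# I went to the
# grocery store yesterday to
# buy some eggs
```

The splitter has no idea that "the grocery store" is a unit, so the first line ends on a dangling "the".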

The slightly less naive approach: split at punctuation. Better, but most casual speech doesn't have much punctuation. People say "so I went to the store and I picked up some eggs and then I came home" as one continuous sentence. Where do you split that?
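A rough sketch of punctuation-based splitting shows why it stalls on casual speech. The helper name `split_at_punctuation` and the exact set of punctuation marks are assumptions for illustration.

```python
import re


def split_at_punctuation(text: str) -> list[str]:
    """Split after common punctuation marks; everything else stays together."""
    # The lookbehind keeps the punctuation attached to the preceding segment.
    parts = re.split(r"(?<=[.,;:!?])\s+", text.strip())
    return [part for part in parts if part]


punctuated = split_at_punctuation("I went to the store, and then I came home.")
run_on = split_at_punctuation(
    "so I went to the store and I picked up some eggs and then I came home"
)
print(len(punctuated))  # 2
print(len(run_on))      # 1
```

With no punctuation to anchor on, the whole run-on sentence comes back as a single 17-word block, which is too long for any caption format.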

The real answer involves understanding phrase structure. "the grocery store" is a noun phrase — breaking it across lines is like cutting a word in ha-lf. "to buy some eggs" is a purpose clause — it belongs together. "yesterday" modifies "went," not "store," so it should probably stay with the verb.

What We Built

We rebuilt our segmentation algorithm from scratch last month (we called it Smart Segmentation 2.0 internally, which is a terrible name but it stuck).

The core ideas:

Context-aware breaking. We parse the transcript into syntactic chunks — noun phrases, verb phrases, prepositional phrases, named entities. The algorithm never breaks within a chunk. "the grocery store" always stays together. "New York City" always stays together.

Format-aware sizing. This was the insight that changed everything: TikTok captions and YouTube captions need completely different line lengths.

For short-form (under 3 min): 3-6 words per block. Tight, punchy, matches the fast scroll pace. Just a few words on screen at a time. This is what you see on viral TikToks.

For long-form (3+ min): 6-12 words per block, often in two lines. More like traditional subtitles. Readable without demanding attention. This is what works for YouTube videos, online courses, podcasts.

We auto-detect which mode to use based on video duration.
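The duration-based switch could look something like the sketch below. The word ranges and the 3-minute cutoff come from the description above; the names `CaptionProfile`, `pick_profile`, and `LONG_FORM_THRESHOLD_SECONDS` are hypothetical and not CaptionBolt's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptionProfile:
    name: str
    min_words: int  # lower bound of the target block size
    max_words: int  # upper bound of the target block size


SHORT_FORM = CaptionProfile("short-form", min_words=3, max_words=6)
LONG_FORM = CaptionProfile("long-form", min_words=6, max_words=12)

# Assumed cutoff: videos of 3 minutes or more count as long-form.
LONG_FORM_THRESHOLD_SECONDS = 3 * 60


def pick_profile(duration_seconds: float) -> CaptionProfile:
    """Choose the block-size profile from the video duration alone."""
    if duration_seconds < LONG_FORM_THRESHOLD_SECONDS:
        return SHORT_FORM
    return LONG_FORM


print(pick_profile(45).name)   # short-form
print(pick_profile(600).name)  # long-form
```

Keeping the profile as plain data means the segmentation step only needs a `min_words` and a `max_words`, and does not have to know which platform the video is for.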

No mid-phrase breaks. This is the rule we enforce above everything else. If the algorithm can't find a clean break point within the target word count, it extends the block rather than cutting a phrase in half. A slightly long caption block is always better than a confusing one.
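One way to picture the "extend rather than cut" rule is a greedy pass over pre-parsed chunks. This is a simplified sketch, not the production algorithm: it assumes a parser has already produced the chunks (they are hand-written here), and the function name `segment_chunks` is made up for illustration.

```python
def segment_chunks(chunks: list[str], min_words: int, max_words: int) -> list[str]:
    """Group syntactic chunks into caption blocks, breaking only between chunks."""
    blocks: list[str] = []
    current: list[str] = []
    count = 0
    for chunk in chunks:
        size = len(chunk.split())
        # Break only when the block is already long enough AND the next chunk
        # would overflow it. Otherwise keep extending, even past max_words.
        if current and count >= min_words and count + size > max_words:
            blocks.append(" ".join(current))
            current, count = [], 0
        current.append(chunk)
        count += size
    if current:
        blocks.append(" ".join(current))
    return blocks


# Hand-written chunks standing in for real parser output.
demo_chunks = [
    "So", "what I've been doing", "lately",
    "is working", "on this new project",
    "that I'm really excited about",
]
for block in segment_chunks(demo_chunks, min_words=3, max_words=6):
    print(block)
# So what I've been doing lately
# is working on this new project
# that I'm really excited about
```

Because a chunk is never split, an oversized phrase simply produces a longer block, which is the trade-off described above.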

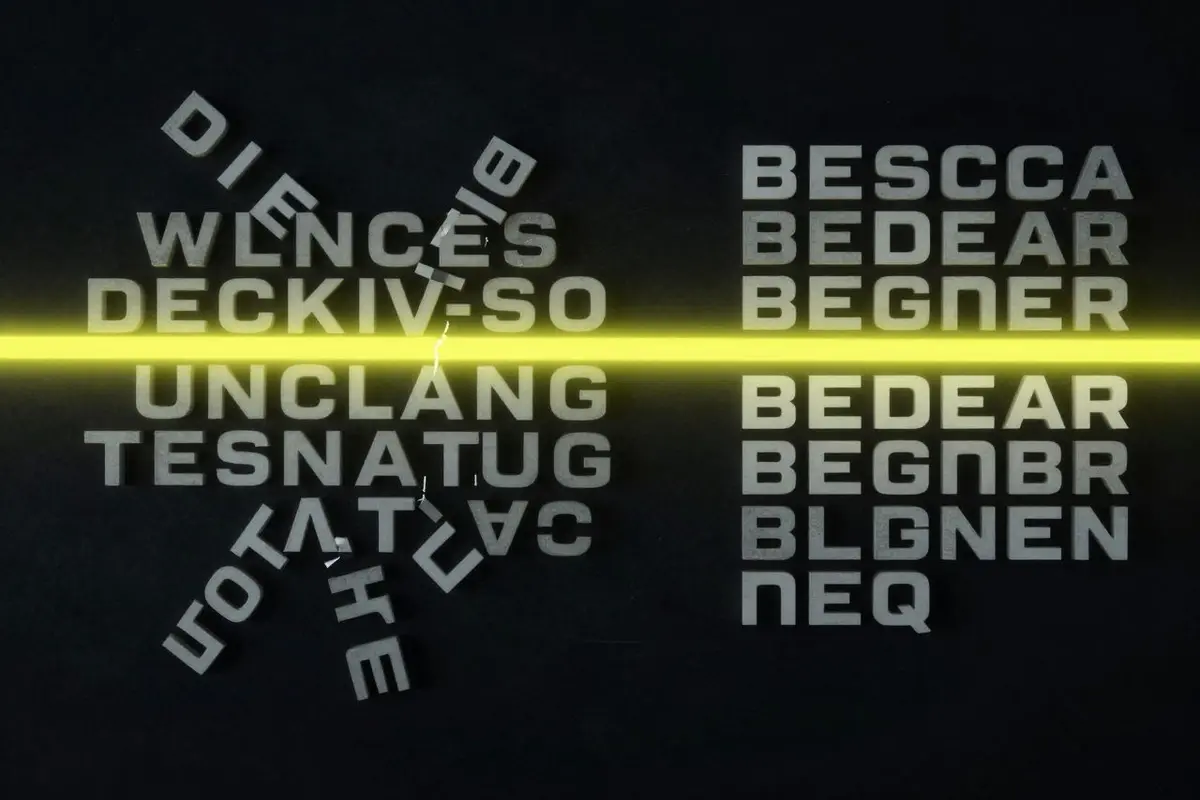

Before / After

Same transcript, old algorithm vs new:

Before:

"So what I've been"

"doing lately is working"

"on this new project"

"that I'm really excited about"

After:

"So what I've been doing lately"

"is working on this new project"

"that I'm really excited about"

The difference looks small in text. In video, with words appearing and disappearing at speech pace, it's night and day. The new version reads naturally. The old version makes you work.

The Uncomfortable Truth

The reason this problem persists in most tools is that it's invisible in demos. When you show a caption tool in a 5-second marketing clip, any segmentation looks fine. It's only when you process a real 60-second video with natural speech patterns that bad breaks become obvious.

We noticed it because we use CaptionBolt for our own content. Every bad break in our own videos drove us slightly more crazy until we finally committed to rebuilding the whole thing.

If you've been using CaptionBolt, the new segmentation is already live. You don't need to do anything — all new videos automatically use the improved algorithm. Go process a video and compare it to something you made a few months ago. The difference should be obvious.

Related Reading

If you want to see the user-facing side of this problem, read our guides on how to add subtitles to a video and how to edit SRT files. For hands-on fixes, use the auto subtitle generator and the subtitle editor.